7 Cheeses, 3 AI Tools, 1 Epic Mother’s Day Picnic

The Arnold Arboretum lets you picnic exactly one day a year. It's called Lilac Sunday, and it falls on Mother's Day. Our two families have done Mother's Day together for three years, but this was our first time at the Arboretum, and the bar was clear: our picnic had to be epic.

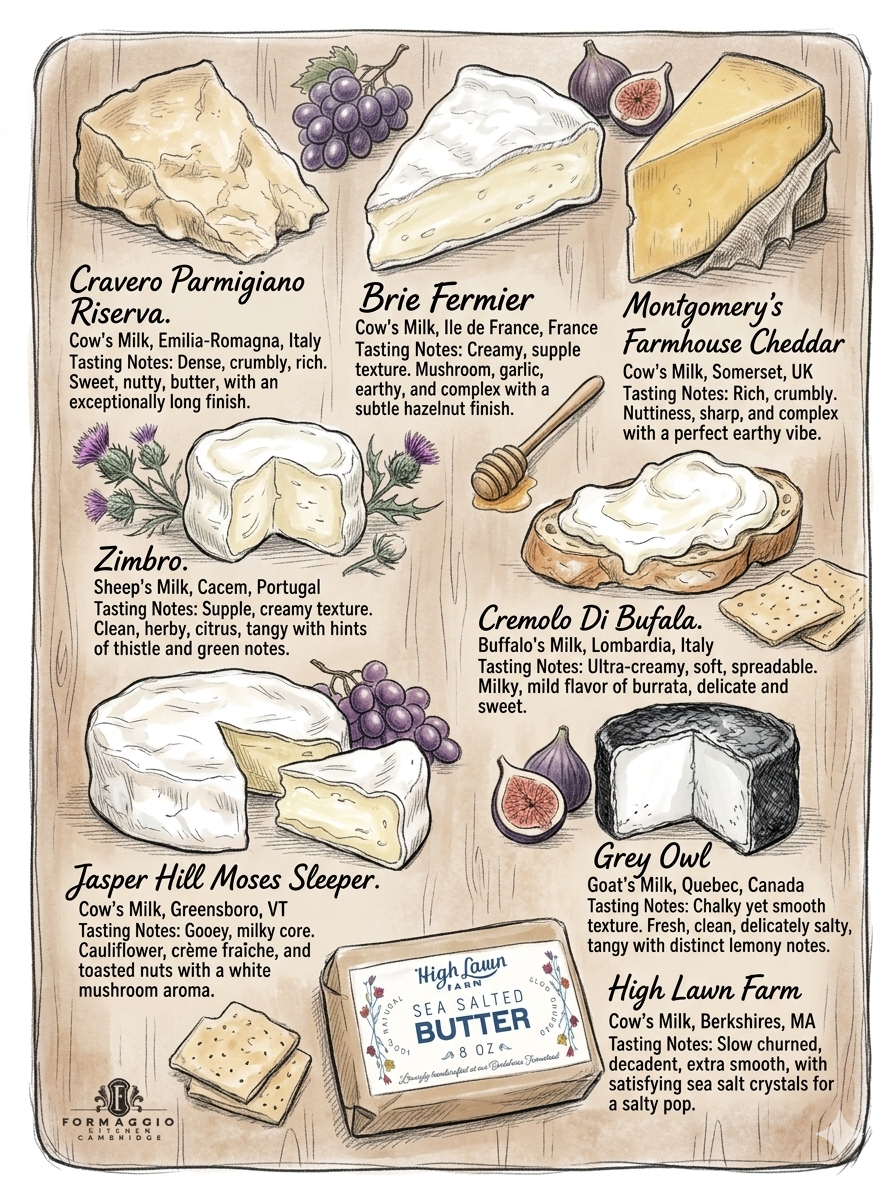

So we bought seven cheeses from Formaggio Kitchen. Plus olives, honey, mostarda, a few jams. Everyone in our group has opinions, so seven was probably the minimum to make sure the right cheese got to the right person. Rex is a parm-and-cheddar guy. Noa is a strong brie. I want the weird one and a chèvre. The other family has their own loyalties.

I wanted a printed guide on the blanket. Something the kids could point at. Something nobody had to look up on a phone mid-picnic.

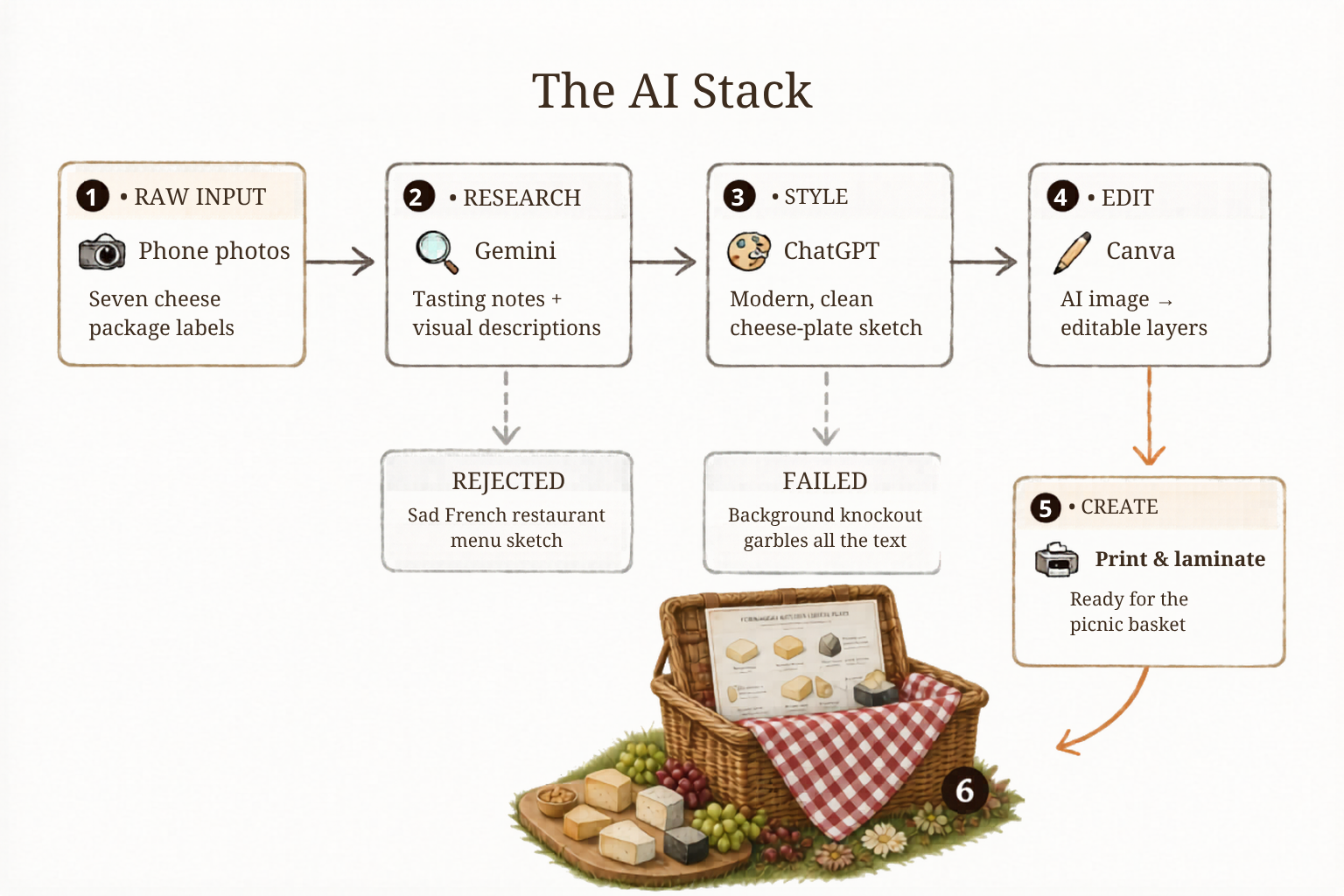

The orchestration

Here is the thing nobody talks about with AI: any one tool is only good at one thing at a time, and you don't know which thing until you hit the wall. The work isn't prompting. The work is noticing the wall, naming what you actually need next, and knowing which tool does that one specific thing well. It's iterative. Every step starts from the previous tool's output and asks: what's missing, and who can fix it? Let me walk you through it.

Wall #1: I need to know what these cheeses are.

Formaggio sells beautiful cheeses with cryptic names. I'm not going to type out seven labels. → I took photos of the packages and handed them to Gemini. Gemini is great at "look at this, tell me what it is." It read every label, identified each cheese, and wrote tasting notes. Then, unprompted, it wrote visual descriptions of each cheese — clearly written as if to be fed into an image generator. I didn't ask. It just understood that this was probably my next step. Beautiful.

Wall #2: I need an actual image.

Gemini offered to render the cheese plate itself. I let it try. The result looked like the menu of a French restaurant that has been open for too long and has stopped trying.

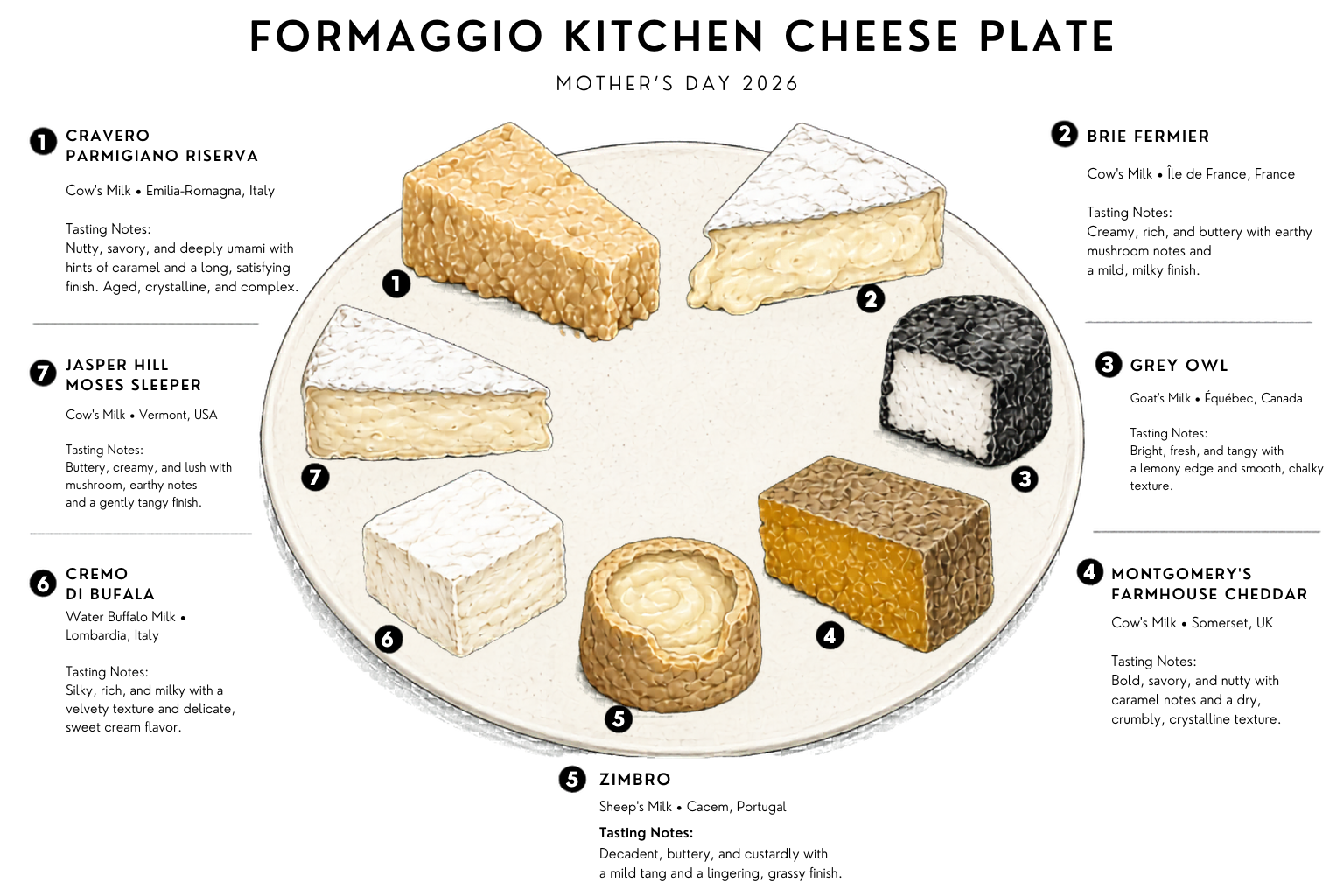

Gemini is not the right tool for this. The trick is recognizing that quickly and not spending forty-five minutes coaxing it. Normally I'd reach for nanobanana here — it's the best image renderer I've used and I will die on that hill — but I'd been hearing the hype about ChatGPT Images 2.0, OpenAI's new image model that supposedly nails the thing image models always fluff: small text and clean typography. So I copied Gemini's visual descriptions over and gave it a shot. The hype was real. After a few rounds: a clean, modern, top-down plate of seven cheeses. Recognizably my cheeses. Style I liked. Crisp little numbered labels that were, miraculously, real words.

Wall #3: I need to change one small thing.

The image had a colored background that would print as stripes. So I asked ChatGPT to knock it out. It could not. The model whose whole headline feature is "we finally fixed text in images" immediately re-hallucinated every single label the moment I asked for an edit. Every "edit" was a full re-render — slightly different plate, slightly different cheeses, completely fictional typography. "PARMIGIANO RISERVA" became "PARMISIANO RISERVA." "Grey Owl" became "GEEV OWL." "Montgomery's Farmhouse Cheddar" became, and I quote, "MONFROVIERY'S FARMFIOUSS CHEDDAR." The serving tip became, I think, Romanian.

This is the trap. You can spend an hour trying to convince an image model to make a small targeted edit, and it will never work, because that's not what image models do. They render whole images. They roll the dice on every regeneration. Text is the part where the dice roll worst. → The move here isn't a better prompt. The move is recognizing you need a different category of tool: not a generator, an editor.

The fix: Canva.

Canva has a feature that takes an AI-generated image and decomposes it into editable layers — background, illustration, text, all separable. Once it's layered, it's not a generation anymore; it's a file. The background knockout becomes a click. The gibberish labels get replaced with real text in real typography. The thing ChatGPT couldn't do in twenty re-rolls, Canva did in ninety seconds.

The pattern

Here's what I want you to notice. The specific tools I used today will probably be wrong in a month, but not because everything got easier. Tools will still be good at one thing at a time. That part isn't going anywhere; it's the underlying physics of how these models get built. What will change is the cast. A new image model that suddenly handles small edits will redraw the boundary between "generator" and "editor." Better integrations between tools will collapse two steps into one. Someone might build an automated chain for exactly this kind of pipeline (though I'm skeptical there's a real business in cheese-plate orchestration). Gemini might make a comeback and quietly become the best image model on the market by August. Any of these would mean a different stack, but still a stack, not a single tool that does everything.

But the thinking doesn't churn. The skill is noticing when you've hit a wall, naming what kind of operation you actually need next (read this? render this? edit this?), and being willing to move tools the moment the current one stops earning its keep. That skill works regardless of which model is winning this week. It's a troubleshooting muscle, not a tool-specific habit.

What it bought me

I printed the guide Saturday night. Laminated it, because we were going to be outside and I have standards. We laid it on the blanket Sunday. The spread was epic enough that strangers walking past asked to take photos.

For four hours, nobody at our blanket needed a phone for anything. Nobody needed to look up what the Zimbro was. Nobody needed to remember whether Noa eats this one or only the other one. The AI part had already happened, the night before. By Sunday it had quietly become a piece of paper.

The funniest part of the day was the path. We kept noticing other visitors stopping, pulling out phones, photographing something we couldn't see from where we were sitting. What were they looking at? So we started sending scouts down the path two at a time to investigate.

None of the scouts brought a phone. Everybody came back saying the rhododendrons on the China Path were absolutely unreal. No photos. No proof. Just: go look.

No hate to the people with phones — the rhododendrons were beautiful and the photos probably were too. But discovering it on foot, two at a time, with someone walking back grinning and saying "trust me, go" — that was a better version of the same afternoon.

That's the trade I want from AI. Do the prep work the night before. Disappear by morning. Leave me with a piece of paper on a blanket and four hours I don't have to manage.

The Arboretum opens for picnics for one day. The kids are this age exactly once.

We will absolutely be back next year, with some new level of picnic we cannot yet conceive of. We will probably brainstorm it with AI.

Eight cheeses, minimum.

I'm the CTO and co-founder of Hold My Juice, where we're building AI that handles the invisible logistics of family life so parents can be present for the parts that actually matter. The cheese plate is the small version. The product is the everyday version.